Wuyang Liu

About Me

I am a final-year Ph.D. student in the School of Cyber Science and Engineering at Wuhan University, where my research focuses on audio signal analysis, particularly audio event detection, and multimodal learning. My academic journey also began at Wuhan University, where I earned my Bachelor’s degree in 2020 with a thesis on spoofed speech detection. Currently, I’m focusing on audio-visual event localization on portrait-mode short videos while keeping a close eye on advances in Multimodal Large Language Models (MLLMs).

When I’m not immersed in research, I recharge by strumming my guitar or diving into Dota 2. Check out this page if you’d like to know the human behind the research.

For collaboration or discussion about audio AI, LLMs, multimodal systems, or the occasional off-topic thought, feel free to reach out.

Research Interests

My research started in audio signal processing and has since expanded to multimodal learning. Currently, I’m focusing on:

- Audio-visual event localization on portrait-mode short videos.

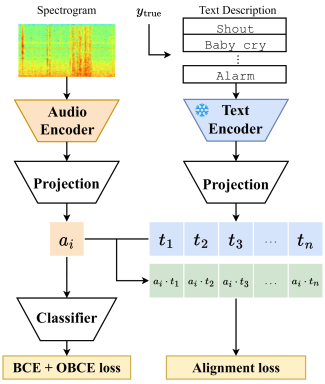

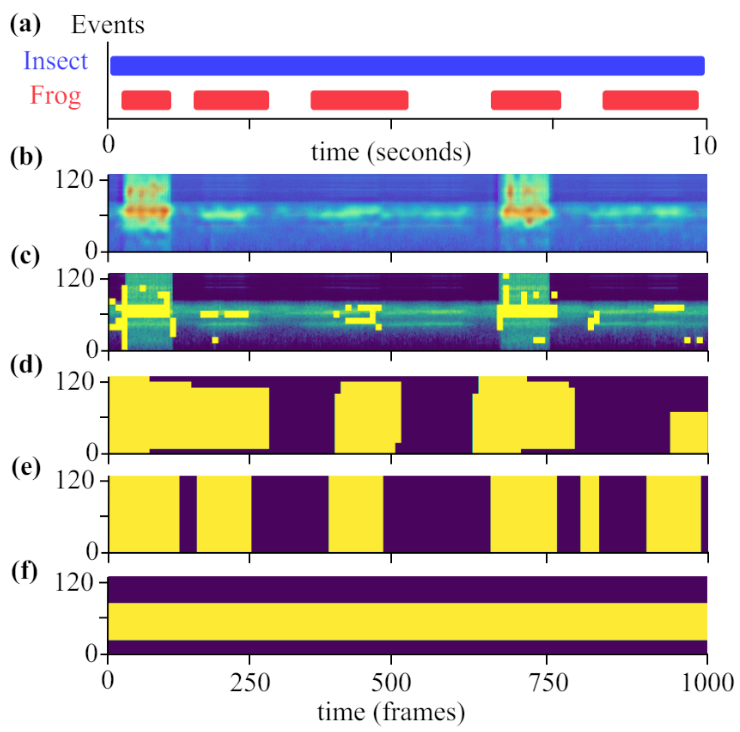

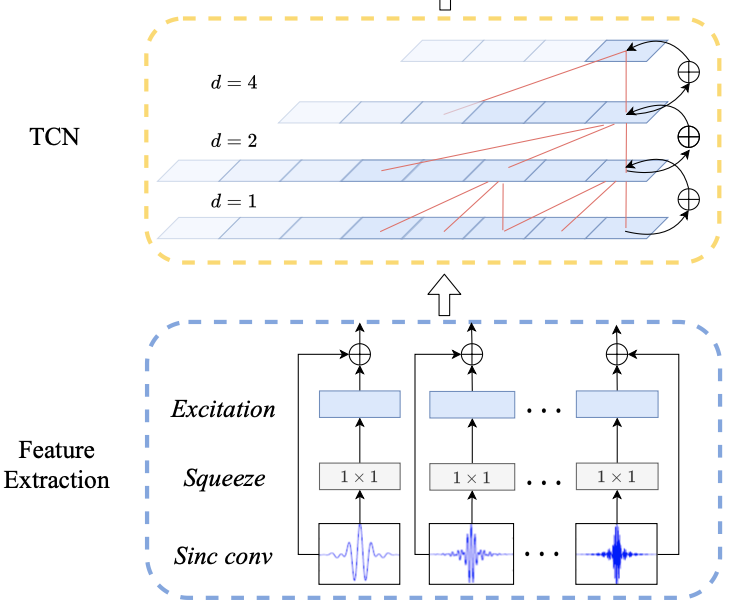

- Audio understanding, especially audio event detection.

- Multimodal Large Language Models (MLLMs).

News

- I am currently looking for research positions.

- [2025.06] I will join ByteDance in Beijing as a research intern working on MLLMs for graph and sequential data.

- [2025.04] Introducing AVE-PM, the first audio-visual event localization dataset on portrait-mode short videos.

- [2024.02] I will be presenting 3 papers at ICASSP 2024. See you in Seoul!

- [2023.11] 2 papers have been accepted to ICASSP 2024.

Publications

- IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2024.

- IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2024.

- IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2023.

- IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2023.

- IEEE International Conference on Image Processing (ICIP), 2021.

- IEEE International Conference on Image Processing (ICIP), 2021.

Services

Program Committee Member of:

- IEEE International Conference on Bioinformatics and Biomedicine (BIBM), 2024

- IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2024-2025

- ACM Multimedia, 2024-2025

Powered by Jekyll and Minimal Light theme.